
NVIDIA H100 NVL GPU Highlights

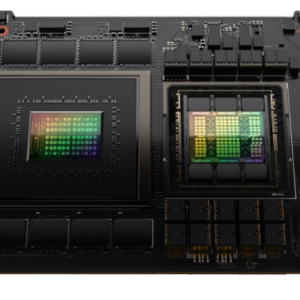

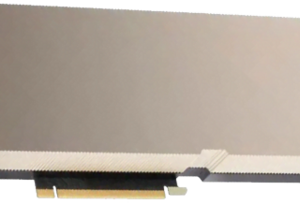

The NVIDIA H100 NVL GPU is designed for high-performance computing and AI workloads, with 94GB of HBM3 GPU memory and 7.8TB/s of memory bandwidth. Peak compute ranges from 68 teraFLOPs for FP64 up to 7,916 teraFLOPs for FP8 Tensor Core operations. The GPU includes 14 NVDEC and 14 JPEG decoders for media workloads. Maximum thermal design power is listed as 2x 350-400W (configurable), and the GPU can be partitioned into up to 14 Multi-Instance GPU (MIG) instances of 12GB each. It comes in a dual-slot air-cooled form factor, connects over NVLink at 600GB/s and PCIe Gen5 at 128GB/s, and is offered in partner and NVIDIA-Certified Systems with 2-4 pairs. NVIDIA AI Enterprise is available as an add-on.

NVIDIA H100 NVL GPU 94GB HBM3 for ChatGPT

$37,080.00

✓ FP64: 68 teraFLOPs

✓ FP64 Tensor Core: 134 teraFLOPs

✓ FP32: 134 teraFLOPs

✓ TF32 Tensor Core: 1,979 teraFLOPs

✓ BFLOAT16 Tensor Core: 3,958 teraFLOPs

✓ FP16 Tensor Core: 3,958 teraFLOPs

✓ FP8 Tensor Core: 7,916 teraFLOPs

✓ INT8 Tensor Core: 7,916 TOPS

✓ GPU Memory: 94GB HBM3

✓ GPU Memory Bandwidth: 7.8TB/s

✓ Decoders: 14 NVDEC, 14 JPEG

✓ Max TDP: 2x 350-400W (configurable)

✓ Multi-Instance GPUs (MIGs): Up to 14 MIGs @ 12GB each

✓ Form Factor: Dual-slot air-cooled

✓ Interconnect: NVLink: 600GB/s, PCIe Gen5: 128GB/s

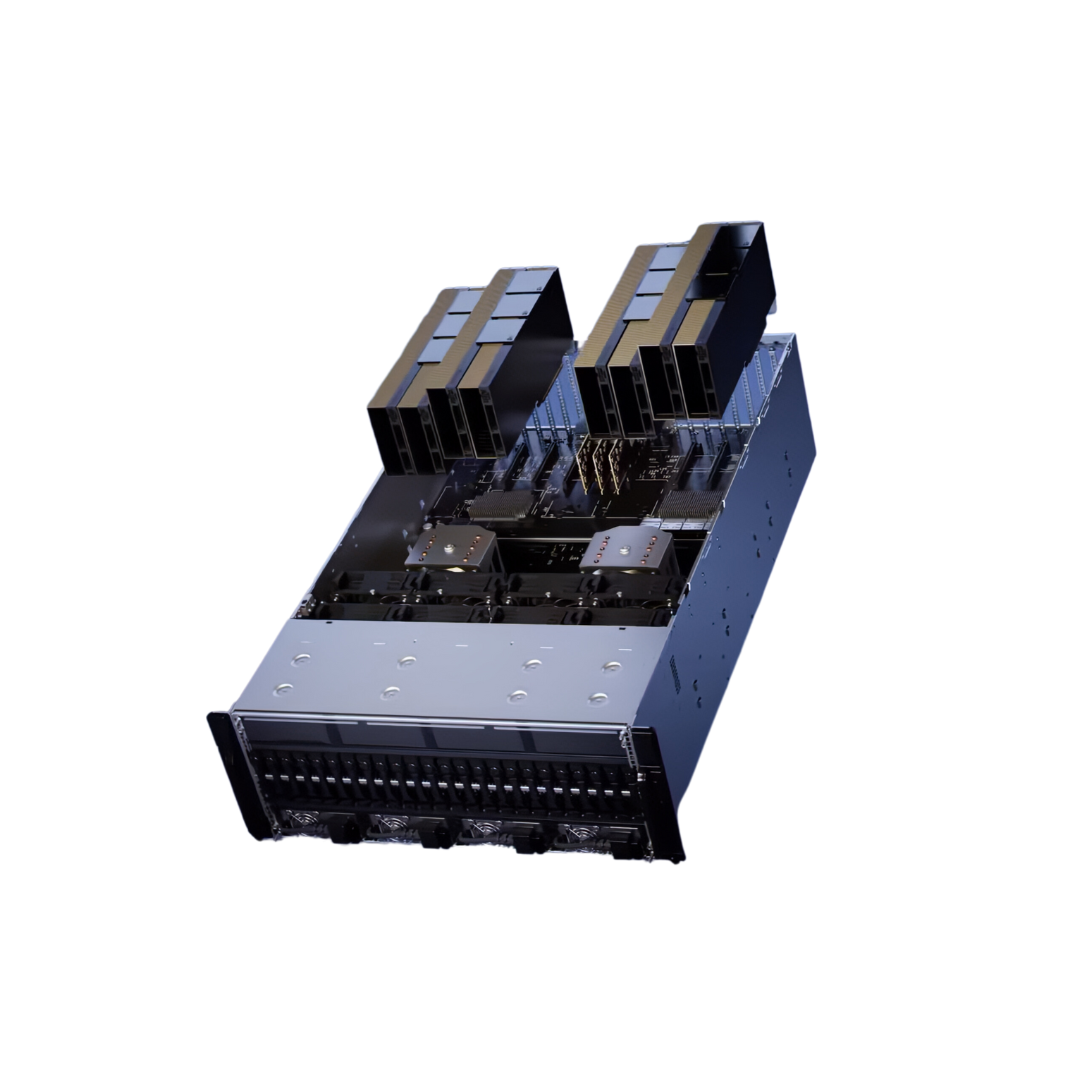

✓ Server Options: Partner and NVIDIA-Certified Systems with 2-4 pairs

✓ NVIDIA AI Enterprise: Add-on

2-3 week Lead Time

| Specification | Details |
|---|---|
| FP64 | 68 teraFLOPs |
| FP64 Tensor Core | 134 teraFLOPs |
| FP32 | 134 teraFLOPs |
| TF32 Tensor Core | 1,979 teraFLOPs |
| BFLOAT16 Tensor Core | 3,958 teraFLOPs |
| FP16 Tensor Core | 3,958 teraFLOPs |
| FP8 Tensor Core | 7,916 teraFLOPs |
| INT8 Tensor Core | 7,916 TOPS |
| GPU Memory | 94GB HBM3 |
| GPU Memory Bandwidth | 7.8TB/s |
| Decoders | 14 NVDEC, 14 JPEG |
| Max Thermal Design Power (TDP) | 2x 350-400W (configurable) |
| Multi-Instance GPUs (MIGs) | Up to 14 MIGs @ 12GB each |
| Form Factor | Dual-slot air-cooled |
| Interconnect | NVLink: 600GB/s, PCIe Gen5: 128GB/s |
| Server Options | Partner and NVIDIA-Certified Systems with 2-4 pairs |
| NVIDIA AI Enterprise | Add-on |
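One rough way to read the compute and bandwidth figures together is to divide each peak throughput by the memory bandwidth, which gives the number of operations a workload would need to perform per byte fetched from memory before compute, rather than memory, becomes the limit. The Python sketch below does that arithmetic with the numbers listed above. The helper name `balance_point` is hypothetical, and the calculation assumes the quoted peaks are reachable at the same time, which real workloads rarely achieve, so treat the output as an upper-bound estimate rather than a benchmark.

```python
# Peak figures copied from the specification list on this page (vendor-quoted peaks).
PEAK_TFLOPS = {
    "FP64": 68,
    "FP64 Tensor Core": 134,
    "FP32": 134,
    "TF32 Tensor Core": 1979,
    "BFLOAT16 Tensor Core": 3958,
    "FP16 Tensor Core": 3958,
    "FP8 Tensor Core": 7916,
}
MEMORY_BANDWIDTH_TBPS = 7.8  # TB/s, as listed under GPU Memory Bandwidth


def balance_point(peak_tflops: float,
                  bandwidth_tbps: float = MEMORY_BANDWIDTH_TBPS) -> float:
    """Hypothetical helper: FLOPs per byte needed to keep compute busy at peak.

    Both inputs use the tera prefix, so the ratio is directly FLOPs per byte.
    """
    return peak_tflops / bandwidth_tbps


if __name__ == "__main__":
    for name, tflops in PEAK_TFLOPS.items():
        print(f"{name:22s} {balance_point(tflops):8.1f} FLOPs/byte")
```

Workloads that perform fewer operations per byte than the printed value for their precision are more likely to be limited by memory bandwidth than by the peak compute figure.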
