NVIDIA A100

Unprecedented Acceleration for World’s Highest-Performing Elastic Data Centers

-

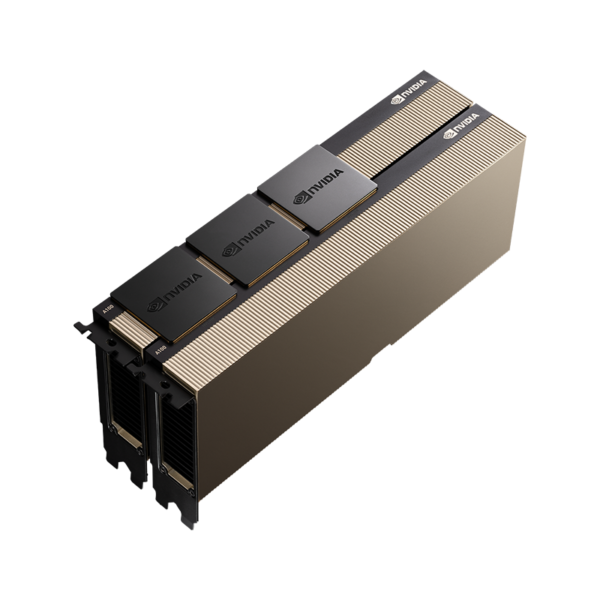

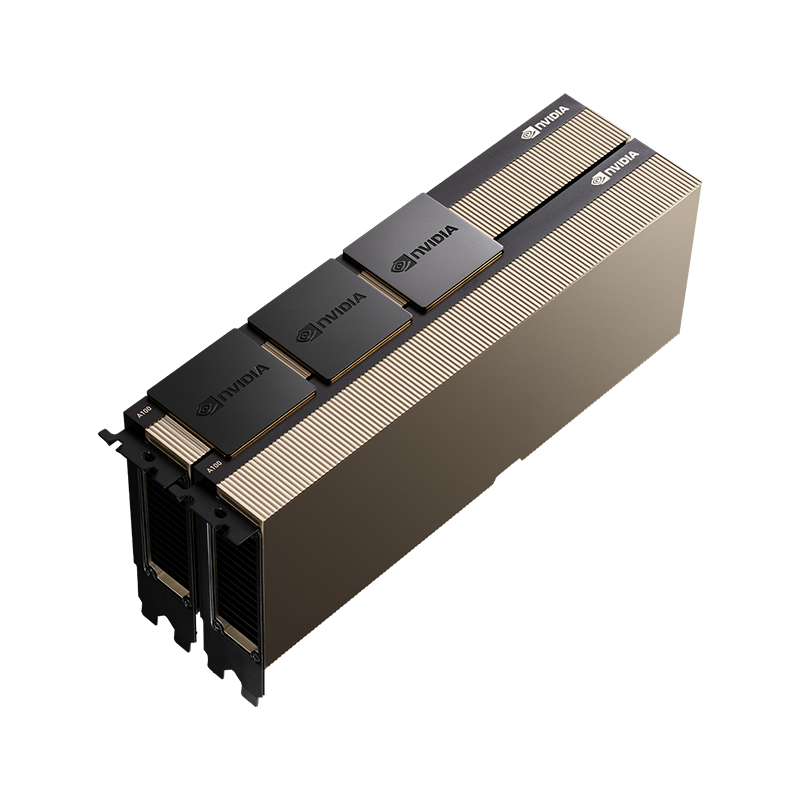

NVIDIA A100

Overview

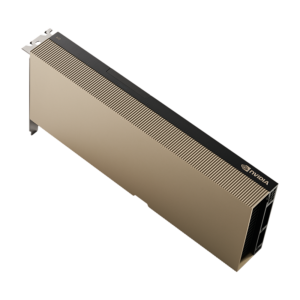

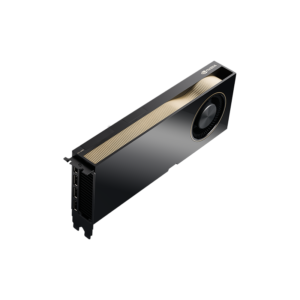

The NVIDIA A100 Tensor Core GPU delivers unprecedented acceleration—at every scale—to power the world’s highest performing elastic data centers for AI, data analytics, and high-performance computing (HPC) applications. As the engine of the NVIDIA data center platform, A100 provides up to 20x higher performance over the prior NVIDIA Volta generation. A100 can efficiently scale up or be partitioned into seven isolated GPU instances, with Multi-Instance GPU (MIG) providing a unified platform that enables elastic data centers to dynamically adjust to shifting workload demands.

-

NVIDIA A100

Overview

A100 is part of the complete NVIDIA data center solution that incorporates building blocks across hardware, networking, software, libraries, and optimized AI models and applications from NGC. Representing the most powerful end-to-end AI and HPC platform for data centers, it allows researchers to deliver real-world results and deploy solutions into production at scale, while allowing IT to optimize the utilization of every available A100 GPU.

-

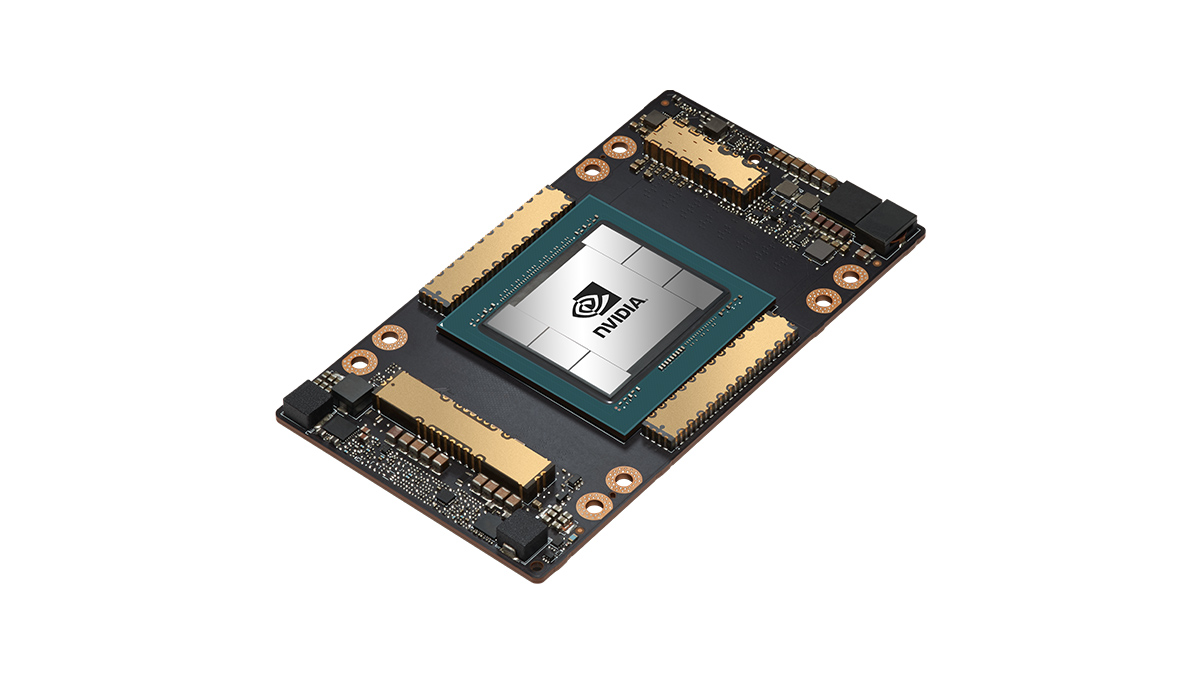

NVIDIA Ampere-Based

Architecture

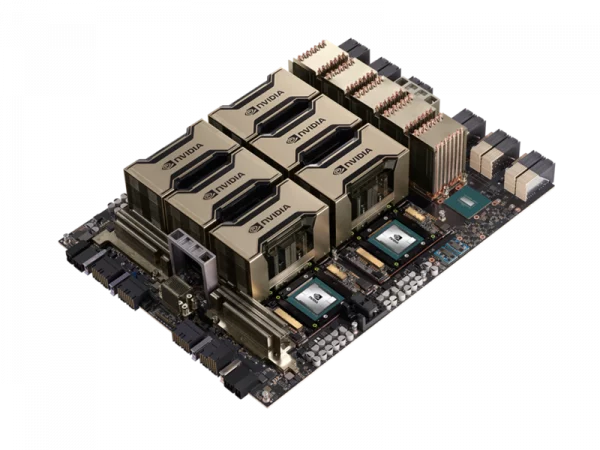

A100 accelerates workloads big and small. Whether using MIG to partition an A100 GPU into smaller instances, or NVLink to connect multiple GPUs to accelerate large-scale workloads, the A100 easily handles different-sized application needs, from the smallest job to the biggest multi-node workload.

-

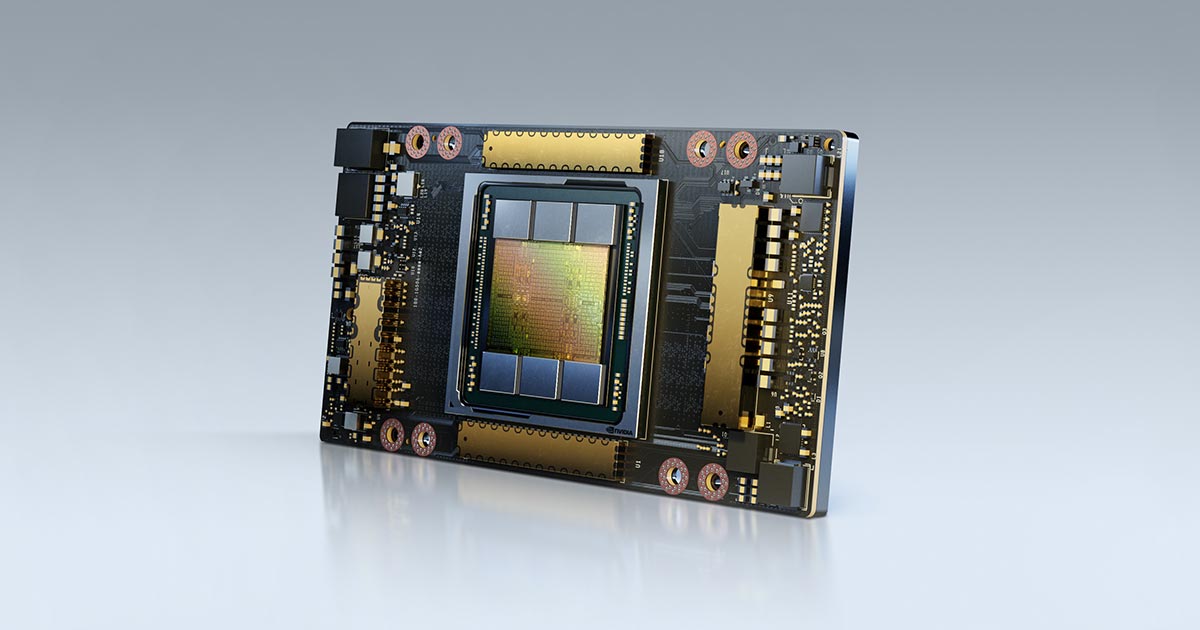

NVIDIA A100

HBM2e

With 40 and 80 gigabytes (GB) of high-bandwidth memory (HBM2e), A100 delivers improved raw bandwidth of 1.6TB/sec, as well as higher dynamic random access memory (DRAM) utilization efficiency at 95 percent. A100 delivers 1.7x higher memory bandwidth over the previous generation.

-

Third-Generation

Tensor Cores

First introduced in the NVIDIA Volta architecture, NVIDIA Tensor Core technology has brought dramatic speedups to AI training and inference operations, bringing down training times from weeks to hours and providing massive acceleration to inference. The NVIDIA Ampere architecture builds upon these innovations by providing up to 20x higher FLOPS for AI. It does so by improving the performance of existing precisions and bringing new precisions—TF32, INT8, and FP64—that accelerate and simplify AI adoption and extend the power of NVIDIA Tensor Cores to HPC.

Sale!

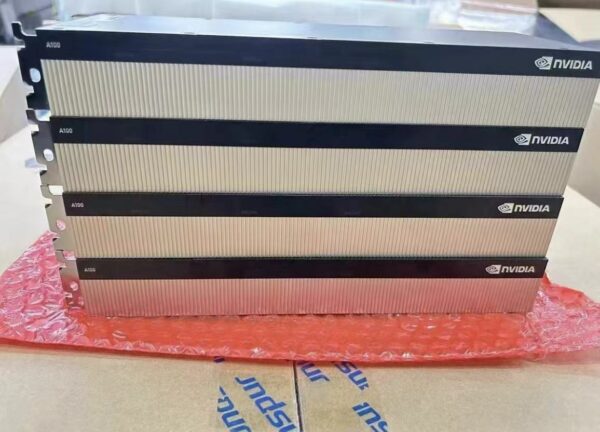

NVIDIA A100 Enterprise PCIe 40GB/80GB

$10,500.00 – $18,500.00

The NVIDIA A100 Tensor Core GPU delivers unprecedented acceleration—at every scale—to power the world’s highest performing elastic data centers for AI, data analytics, and high-performance computing (HPC) applications. 3-year manufacturer warranty included.

Ships in 10 days from payment. All sales final. No cancellations or returns. For volume pricing, consult a live chat agent or all our toll-free number.

Update 12.14.2023: A100 40GB/80GB series is slated to be discontinued in January, 2024. The L40S and H100 are Nvidia’s only comparable alternatives currently.

| CUDA Cores | 6912 |

|---|---|

| Streaming Multiprocessors | 108 |

| Tensor Cores | Gen 3 | 432 |

| GPU Memory | 40 GB or 80 GB HBM2e ECC on by Default |

| Memory Interface | 5120-bit |

| Memory Bandwidth | 1555 GB/s |

| NVLink | 2-Way, 2-Slot, 600 GB/s Bidirectional |

| MIG (Multi-Instance GPU) Support | Yes, up to 7 GPU Instances |

| FP64 | 9.7 TFLOPS |

| FP64 Tensor Core | 19.5 TFLOPS |

| FP32 | 19.5 TFLOPS |

| TF32 Tensor Core | 156 TFLOPS | 312 TFLOPS* |

| BFLOAT16 Tensor Core | 312 TFLOPS | 624 TFLOPS* |

| FP16 Tensor Core | 312 TFLOPS | 624 TFLOPS* |

| INT8 Tensor Core | 624 TOPS | 1248 TOPS* |

| Thermal Solutions | Passive |

| vGPU Support | NVIDIA Virtual Compute Server (vCS) |

| System Interface | PCIE 4.0 x16 |